Loading VIs


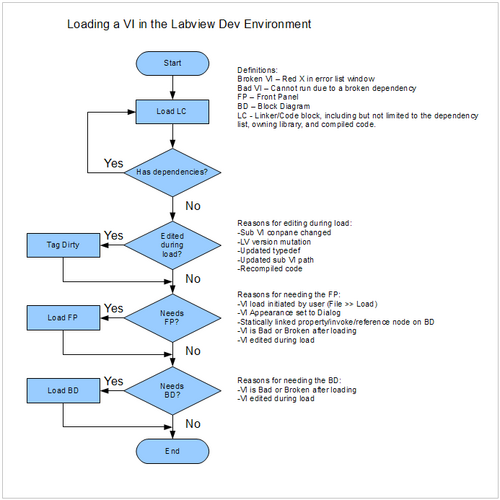

Flowchart

This is a simplified flowchart showing LabVIEW's decision tree when loading a VI into memory from disk.

Note that this diagram applies to LabVIEW 2010 when compiled code is not separated from source code. Other versions may differ slightly.

Discussion

Aristos Queue

I'm going to try to explain some details of loading a VI. What happens during load depends upon what happened when the VI was last saved, so let's start there.

A good VI is one that has an unbroken run arrow. A broken VI is one that has a red X next to its name in the Error List Window because that VI itself has some problem. A bad VI is one that is listed in the Error List Window because some other VI/library that it depends upon is broken. There's a special case involving typedefs, poly VIs, and global VIs that are in the middle of being edited but are at the moment valid (i.e., they would not be broken if the user did Apply Changes). Such VIs have the pencil icon next to their name in the Error List Window. Those VIs are good, but VIs that use them are bad.

If a VI is good, then we can compile it, meaning we can generate the assembly instructions for that VI to run. When we save a good VI, we compile it and save the compiled code as part of the VI.

If a VI was broken or bad then we can't compile it, so when we save such a VI, we obviously can't save the compiled code. However, a VI might have been good, been compiled, and then become bad without any changes to its own block diagram. In that case, we already have the compiled code for the VI, and so we'll save that with the VI. There's also the special case of a VI that is broken only because it is missing subVIs. We may already have compiled code for such a VI, and so we'll keep that code around if we are asked to save again. (In the case of typedefs, they do have compiled code. They carry the instructions for copying and comparing their data. PolyVIs have no code. I'm not sure about global VIs.)
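As a rough illustration (not LabVIEW's actual implementation), the save rule described above can be sketched in Python. The names `Status`, `compiled_code_to_save`, `cached_code`, and `compile_vi` are hypothetical, and the sketch assumes that any compiled code a VI still holds is passed in as `cached_code` (or `None` if it holds none).

```python
from enum import Enum
from typing import Callable, Optional


class Status(Enum):
    GOOD = "good"      # unbroken run arrow
    BROKEN = "broken"  # the VI itself has a problem
    BAD = "bad"        # something the VI depends on is broken


def compiled_code_to_save(
    status: Status,
    cached_code: Optional[bytes],
    compile_vi: Callable[[], bytes],
) -> Optional[bytes]:
    """Return the compiled code that would be written with the VI, or None."""
    if status is Status.GOOD:
        # A good VI is compiled at save time and the result is saved.
        return compile_vi()
    # A broken or bad VI cannot be compiled now, but code left over from
    # when it was last good (if any) is saved along with it.
    return cached_code
```

For example, a VI that became bad only because a dependency broke would be saved with the code it already had, while a broken VI with no leftover code would be saved with none.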

Ok. That covers save (or, rather, it covers enough of save that we can now talk about load).

When we use the phrase "a VI is loaded into memory", we really mean, "some portion of the VI's file is in memory in a way that other VIs may link to it and it can be edited and/or executed." If you use the VI Server method "Read Linker Info", that reads the connection information of a VI without loading the VI -- so although we opened the VI file and read some data out of it, we didn't properly make the VI available to the editor and execution system. The same is true when the palettes load -- they read the icons out of the VIs in the directories, without actually loading the VIs.

There are N parts of a VI, where N is a number I don't remember right now. But for the purposes of this conversation, we can really think of there being just three parts: the front panel (FP), the block diagram (BD) and something I'm going to call "the linker/code block" (LC). That's not a term you'll find anywhere else because I just made it up. The LC is all the stuff that makes a VI be a VI in memory, including:

- the linker information (the list of other LV files upon which this VI depends)

- the identity of the VI (which library owns this VI)

- the compiled assembly code (unless the VI is broken, in which case there is no compiled assembly code)

- and probably some other stuff not relevant to this conversation.

In the development environment, whenever you say File >> Open and load a top-level VI, the LC and FP load into memory. If the VI was last saved as bad or broken or if it turns out to be bad/broken after it finishes loading its dependencies, then the BD also loads into memory. If that VI has subVIs, the LC for each of those subVIs loads into memory. The FP of a subVI loads if it is considered necessary (see my earlier post in this thread for things that make that necessary). Both the FP and BD are loaded if those subVIs were saved bad or broken OR if they turn out to be bad/broken once dependencies are loaded.

For all VIs, if an edit is needed during load (say, a subVI conpane changed while the caller was not in memory, or a typedef needs to update, or LV version mutation, or updating the path to a subVI), both FP and BD load.

In the runtime engine, the BD never loads. Obviously, the BD doesn't load if the VI was saved without a block diagram. In a real-time system, neither the FP nor the BD ever load.
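The rules in the last few paragraphs can be summarized as a small decision function. This is only a sketch of the description above, not LabVIEW's real logic: `LoadRequest`, `parts_to_load`, and every field name are hypothetical, and applying the development-environment rules to the runtime engine before removing the BD is an assumption made for illustration.

```python
from dataclasses import dataclass
from typing import Set


@dataclass
class LoadRequest:
    environment: str = "dev"            # "dev", "runtime", or "realtime"
    is_top_level: bool = True           # opened directly vs. loaded as a subVI
    saved_bad_or_broken: bool = False   # state when the VI was last saved
    bad_or_broken_after_deps: bool = False  # state once dependencies loaded
    fp_necessary: bool = False          # subVI panel is considered necessary
    needs_edit_on_load: bool = False    # conpane/typedef/version/path update
    has_block_diagram: bool = True      # False if saved without a diagram


def parts_to_load(req: LoadRequest) -> Set[str]:
    """Return which of "LC", "FP", "BD" end up in memory."""
    parts = {"LC"}  # the linker/code block always loads
    if req.is_top_level or req.fp_necessary:
        parts.add("FP")
    if req.saved_bad_or_broken or req.bad_or_broken_after_deps:
        parts.update({"FP", "BD"})
    if req.needs_edit_on_load:
        parts.update({"FP", "BD"})
    # Environment restrictions override everything above.
    if req.environment == "runtime" or not req.has_block_diagram:
        parts.discard("BD")
    if req.environment == "realtime":
        parts -= {"FP", "BD"}
    return parts
```

Under these assumptions, a healthy subVI whose panel is not needed loads only its LC, while a subVI that was saved broken brings its FP and BD along as well.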

After loading the VI (and all its dependencies), if the compiled code is missing and the block diagram is loaded and the VI is not broken/bad, then LV will go ahead and compile the VI and put a docmod on the VI (the little asterisk that means "this VI has unsaved changes").
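The post-load compile condition is a three-way conjunction, which a short sketch makes explicit. `LoadedVI` and `finish_load` are hypothetical names used only for illustration; the `dirty` flag stands in for the docmod.

```python
from dataclasses import dataclass


@dataclass
class LoadedVI:
    has_compiled_code: bool
    bd_loaded: bool
    broken_or_bad: bool
    dirty: bool = False  # stands in for the docmod (the asterisk)


def finish_load(vi: LoadedVI) -> bool:
    """Compile after load if needed; return True if a compile happened."""
    if not vi.has_compiled_code and vi.bd_loaded and not vi.broken_or_bad:
        vi.has_compiled_code = True  # the VI now has code to run
        vi.dirty = True              # the new code counts as an unsaved change
        return True
    return False
```

If any one of the three conditions fails, nothing is compiled and the VI is not marked as modified.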

The FP and BD will eagerly unload as soon as the reasons for them staying in memory are dealt with. So if you fix a broken VI and then save the changes, FP and BD will unload unless the windows are open or the FP is necessary for the VI.

If you want proof that the diagram loads independently, try this: Save a caller VI and its subVI. Open the caller VI. In the operating system, copy the subVI file on disk to a temp location. Back in LV, open and modify the subVI's diagram. Save the subVI and close the panel and diagram (keep the caller VI open). Now copy the temp file over the subVI file. Now open the block diagram of the subVI again. You'll get one of the more interesting dialogs that LV has to offer, noting that the diagram saved doesn't match the VI in memory; you can choose either not to reload the diagram or to load it, which will recompile your VI for the new diagram code. If you didn't save the subVI (meaning you chose Defer Decision on the Save Changes dialog), then the diagram is already in memory and I'm pretty sure you won't get that dialog because LV doesn't bother to check disk for stuff it already has loaded.

Daklu

Say you're editing a typedef cluster and all the dependent LC blocks are loaded, but their FPs/BDs are not. When the typedef's changes are applied, LV finds all the dependent VIs, loads the block diagrams, and makes the necessary edits transparently to the user (i.e., without opening windows to the block diagrams). Since the dependent VIs are now dirty, their FPs are also loaded into memory, forcing data copies for those FP controls when the app is executed, even though the FP windows aren't open, right?

Aristos Queue

Yes. All that is correct.

There's another variant -- the FP could be the thing hosting the typedef, and when the typedef changes, LV will load both the FP and the BD into memory in order to update the FP. The reason the BD loads is because the VI has to recompile to deal with the typedef change.

Daklu

Does this behavior change when separating compiled code in LV10? My initial thought is that LV ought to be able to recompile the dependent VIs' source code and remove the FP/BD from memory without marking them dirty, and simply wait until the user opens a given VI before giving it a dirty dot. But that could lead to confusing behavior when multiple typedef changes are applied sequentially (like what happens when a VI's LC block isn't loaded when changes are applied), so I'm guessing no.

Aristos Queue

I know that it does change. I do not know how dramatic the change is. Your analysis sounds completely plausible, but I haven't really stayed up-to-date on the source-obj splitting.

Daklu

The one open question I still don't have an answer to is: why does the FP have to be loaded when the BD is loaded?

Aristos Queue

Up until LV 2009, the default values of controls were stored as part of the front panel, so any time the VI wanted to recompile, the panel was actually necessary to generate the code. Nowadays the default values have been moved out of the panel, and we are inching closer to the day when the recompile could happen with just the diagram without the panel, but there are 25 years' worth of code that assumes "if I have the diagram then I know the panel is in memory, so I don't have to test for NULL". It's a low-priority refactoring that is ooching forward.

External Links

Original LAVA discussion.